Why I Built This

Hi! I'm Yamura, I love building and exploring stuff online. Recently, I’ve noticed people don’t really use idioms anymore, well except teachers. It's become a lost art, and I want the art to be accessible again! So I made it my mission to make something fun to bring the idioms back to life.

A friend led me to Fireworks.ai, an extremely cool website that gave me $6 in free credits to spend. So, an idea formed in my head. A situation -> idiom LLM. And a bit later: why not just make both English and Dutch? Because English is good to distribute the model, more people speak English. And Dutch because it's my mother tongue.

The Plan

So I went ahead and expanded the plan, made it detailed. I knew I needed a base model and a dataset. I got to work. I asked Claude how to go about it. It said to do LoRa SFT (Supervised Fine-Tuning), which fits the $6 budget perfectly and ensures the model won't lose its existing knowledge of the Dutch language. It also generated a dataset format.

Collecting the Idioms

I first started off by getting high-quality, correct idioms with their meanings. For the English ones, I used theidioms.com and ef.com. I wrote a simple Python script that extracted the idiom and its meaning, combined the two sites' data (and removed duplicates), and wrote it to a JSON file. For the Dutch ones, I did the same, with the primary source being onzetaal.nl.

I got two separate JSON files, one for Dutch, one for English. They looked like the following:

[

{

"idiom": "call it a day",

"meaning": "Stop working on something.",

"source": "ef"

}

]

Generating Training Data

After that, I wrote another script that generated the actual dataset. I used the qwen/qwen3.6-plus:free model on OpenRouter. It asks the LLM to generate for each idiom:

- A Usage Example: A natural sentence using the idiom.

- A Translation: The equivalent idiom or translation in the target language.

- Three Situations: One casual, one formal, and one in the "other" language (e.g., an English situation for a Dutch idiom).

Here is what the generated data looked like:

{

"messages": [

{

"role": "system",

"content": "Je bent een expert in Nederlandse uitdrukkingen en spreekwoorden. Gegeven een situatie, geef je het meest passende Nederlandse idioom of spreekwoord, samen met de betekenis, een gebruiksvoorbeeld en de Engelse vertaling."

},

{

"role": "user",

"content": "Situatie: A student who ignores the syllabus and study deadlines, fails a crucial midterm exam, and then complains to the professor about the unfair difficulty of the test."

},

{

"role": "assistant",

"content": "**Uitdrukking:** eigen schuld, dikke bult\n\n**Betekenis:** Je bedoelt ermee: je hebt dit aan jezelf te wijten, dus ik heb geen medelijden met je.\n\n**Voorbeeld:** 'Je hebt de handleiding expres niet gelezen en nu werkt het apparaat niet; tja, eigen schuld, dikke bult.'\n\n**Engels:** Serves you right"

}

]

}

Training Setup

So now I had a pristine dataset (train.jsonl). I went over to Fireworks and used Qwen 3 14B as my base model. I bumped the LoRA Rank to 16 to give it enough capacity to learn the bilingual mapping, selected 3 epochs, and started the training job.

The most important part came: the name. I chose ScenIdiom, an original mix of 'scene' and 'idiom', referring to mapping scenes -> idioms.

The Results

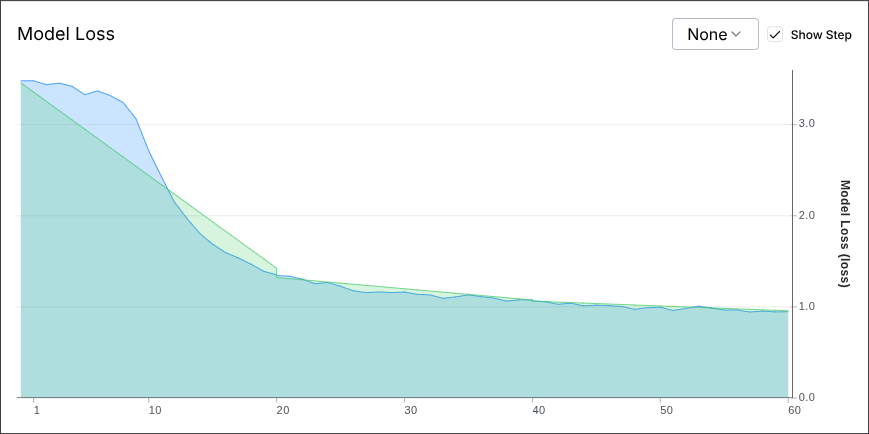

The training ran flawlessly. Because the dataset was so clean and formatted perfectly, the loss curve dropped smoothly without any overfitting. And the best part was training this 14-billion parameter bilingual bad boy cost exactly $1.93.

Released on Hugging Face

I downloaded the weights and pushed the final adapter to Hugging Face so anyone can use it. You can check it out and run it yourself here:

yamura4/scenidiom-qwen3-14b-lora

Hope you enjoyed the article!